The AbuseFilter extension for MediaWiki, which helps prevent vandalism on wikis, will be globally enabled on all Wikimedia projects later today.

AbuseFilter was developed by Andrew Garrett with support from the Wikimedia Foundation; it was first enabled on the English Wikipedia in March 2009.

Since then, many local wiki communities have individually asked for AbuseFilter to be enabled on their wiki. As of July 2011, AbuseFilter was already enabled on 66 of the 843 wikis the Wikimedia Foundation hosts.

It recently became apparent that it would be simpler to enable AbuseFilter by default on all wikis than to keep enabling it on request.

When enabled, AbuseFilter comes with no built-in default filters, so no immediate change will be visible on the wikis where it is newly enabled.

Unlike other anti-vandalism tools, AbuseFilter works by analyzing edits before they’re saved, rather than trying to identify (and revert) them after the fact.

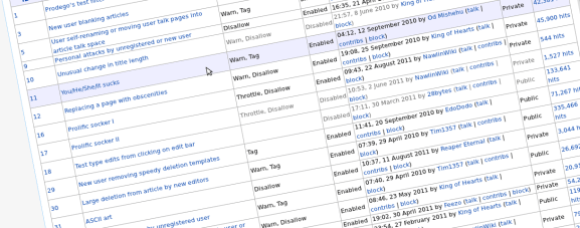

Filters, or “rules”, can be added to AbuseFilter to identify certain kinds of edits matching a pattern. Actions can be taken for these edits, like tagging the edit, preventing the user from saving the page, or even automatically blocking the user. The AbuseFilter documentation provides the format in which filters must be written.
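To make the idea concrete, here is a minimal Python sketch of the general technique: checking an edit against a list of pattern-based rules before it is saved, then collecting the resulting actions. It is only an illustration, not AbuseFilter's actual implementation or rule syntax; the names `Edit`, `Filter`, `check_edit`, and the action strings `"tag"`, `"disallow"`, and `"block"` are all hypothetical.

```python
import re
from dataclasses import dataclass


@dataclass
class Edit:
    # Hypothetical, simplified view of an edit awaiting save.
    user: str
    user_editcount: int
    added_text: str


@dataclass
class Filter:
    # Hypothetical rule: a regular expression plus an edit-count threshold.
    name: str
    pattern: str        # matched case-insensitively against the added text
    max_editcount: int  # rule only applies to users with fewer edits than this
    actions: list       # any of "tag", "disallow", "block" (illustrative names)

    def matches(self, edit):
        return (
            edit.user_editcount < self.max_editcount
            and re.search(self.pattern, edit.added_text, re.IGNORECASE) is not None
        )


def check_edit(edit, filters):
    """Run every filter against an edit before it is saved.

    Returns a tuple (saved, tags, blocked).
    """
    saved, tags, blocked = True, [], False
    for f in filters:
        if not f.matches(edit):
            continue
        if "tag" in f.actions:
            tags.append(f.name)   # flag the edit for patrollers
        if "disallow" in f.actions:
            saved = False         # prevent the page from being saved
        if "block" in f.actions:
            blocked = True        # mark the user for blocking
    return saved, tags, blocked


filters = [
    Filter("possible spam", r"buy cheap", 10, ["tag"]),
    Filter("insult", r"\bidiots?\b", 5, ["disallow"]),
]
edit = Edit("NewUser", 2, "Buy cheap watches here")
print(check_edit(edit, filters))  # (True, ['possible spam'], False)
```

In this sketch the first rule only tags the edit, so it is still saved; a rule carrying the `"disallow"` action would stop the save instead.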

Because AbuseFilter has been in use on the English Wikipedia for more than two years, more details about how it works are available in that wiki's documentation; instructions on how to create a filter are also available.

It is possible to export filters from a wiki, and to import them into another one.

AbuseFilter is an extremely powerful tool, with the potential to prevent edits, block users, and make a whole wiki unusable. Therefore, it must be used with extreme caution; filters should only be created and edited by administrators who understand their purpose and syntax.

AbuseFilter can also be used to identify edits that are not abusive, for tracking purposes. Tags can be automatically added to edits matching a certain pattern, thus giving editors and patrollers a heads-up about certain edits (see examples).

Because such tags can also be used to identify legitimate edits, AbuseFilter is sometimes referred to as “Edit filter”.

AbuseFilter offers the possibility for certain filters to be private, to prevent long-time abusers from knowing how their edits are being identified.

We hope this tool will prove useful to our community of editors and patrollers.

Guillaume Paumier

Technical communications manager
