Does a constant stream of new editors really make Wikipedia better? Increasing participation is one of the top five priorities in our strategic plan. But when we talk about retaining newly registered editors, some readers and experienced editors rightly ask how many edits by newbies actually improve the free encyclopedia.

In the Community Department, we’re facilitating the WikiGuides pilot program on the English Wikipedia to reach out to new contributors and mentor them. To do that successfully, we must quickly identify which new editors are actually doing good work.

So one of our working questions is: How many contributions by new editors are made in good faith and are worth retaining or improving?

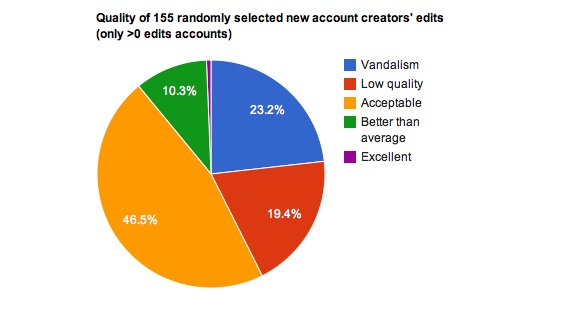

We took a random sample of 155 newly registered users on the English Wikipedia who made at least one edit in mid-April of this year. We looked at each user's first edit and rated it on a 1–5 scale. A 1 is pure vandalism. A 5 is an excellent edit: it adds a significant chunk of verified, encyclopedic content and would be indistinguishable from the work of a very experienced editor. The first chart shows the resulting distribution.

Even with a very high standard for quality (we awarded only a single "5"), most new editors made contributions worth keeping in some form, even if they weren't perfect. More than half of these first edits needed no reworking to be acceptable under current Wikipedia policy. Another 19% were good-faith edits that needed further work to meet the standards set out in Wikipedia's policies and guidelines.

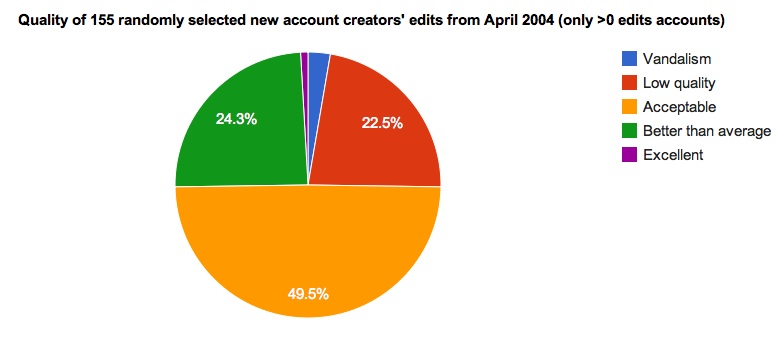

To see whether this has changed over time, we drew a comparable cohort from the same period in April 2004 and applied the same qualitative assessment.

The key point when comparing the two samples is that the percentage of acceptable edits made by newbies did not decrease dramatically between 2004 and 2011. That holds even though the bar for quality has risen over time, and even though there are arguably fewer obvious contributions left to make now that Wikipedia has grown by millions of articles.

It is also worth noting that although both cohorts contain 155 new editors, it took several days for that many new editors to join Wikipedia in 2004. In 2011, our sample is a tiny slice of the new editors arriving every month. For example, on Monday of this week more than 1,800 editors joined the English Wikipedia and made at least one edit; on the equivalent day in 2004, there were only about 60.
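To make the difference in scale concrete, here is a rough back-of-the-envelope calculation. It assumes, purely for illustration, that the single-day figures quoted above (about 1,800 new editors in 2011 and about 60 in 2004) are typical daily rates; real sign-up numbers vary from day to day, so the results are approximate. The names `DAILY_NEW_EDITORS`, `days_to_fill_sample`, and `sample_share_of_day` are hypothetical helpers introduced only for this sketch.

```python
import math

# Illustrative assumption: treat the one-day figures from the post as typical
# daily sign-up rates for editors who make at least one edit.
SAMPLE_SIZE = 155
DAILY_NEW_EDITORS = {2004: 60, 2011: 1800}


def days_to_fill_sample(daily_rate: int, sample_size: int = SAMPLE_SIZE) -> int:
    """Whole days needed for `daily_rate` new editors per day to reach the sample size."""
    return math.ceil(sample_size / daily_rate)


def sample_share_of_day(daily_rate: int, sample_size: int = SAMPLE_SIZE) -> float:
    """Sample size expressed as a fraction of one day's new editors."""
    return sample_size / daily_rate


for year, rate in DAILY_NEW_EDITORS.items():
    print(year, days_to_fill_sample(rate), round(sample_share_of_day(rate), 3))

# Ratio of the 2011 daily figure to the 2004 daily figure.
growth = DAILY_NEW_EDITORS[2011] / DAILY_NEW_EDITORS[2004]
print(f"growth factor: {growth:.0f}x")
```

Under these assumptions, a 155-editor sample takes about three days to accumulate at 2004 rates, but amounts to less than a tenth of a single day's newcomers at 2011 rates, roughly a thirtyfold difference in daily volume.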

Our sample strongly suggests that thousands of new editors still join Wikipedia every month with valuable contributions to make. Ensuring that we welcome these newcomers and show them the ropes is a top priority for ensuring Wikipedia’s continued success in our second decade.

(This is the first in what will be a new series of blog posts coming out of the Community Department at the Wikimedia Foundation. Starting now and continuing through the summer, we will be sharing the questions, experiments, and fresh data that currently drive our work. While you’ll get an inside look at what we’re doing, our numbers and analysis are still evolving and should be taken with a grain of salt.)

Steven Walling

Wikimedia Foundation Fellow, on behalf of the Community Dept. – especially Philippe Beaudette, James Alexander, and Maryana Pinchuk.
